Hi! This is definitely not legal advice, so consult with a lawyer and do your own research if you are thinking of applying this to your own practices. I work for Scrapinghub as well and try to understand the law around this. I can help with some pointers to why I think some web scraping isn't illegal, though there are of course some limits to this.

When the data scraped "is publicly available on the Internet, without requiring any login, password, or other individualized grant of access", the U.S. District Court for the Eastern District of Virginia ruled in Cvent vs Eventbrite that one could not be deemed to be exceeding authorized access.

There are two ways, that I know of, that courts have ruled you can exceed your authorization. First, when the site owner has contacted you and removed your authorization in a written manner, as happened in Craigslist vs 3taps. Second, by accepting the terms of service and agreeing against scraping. You have to do this through a "clickwrap" ToS, rather than a "browsewrap". You can read about the differences here:

As a matter of policy, we don't scrape any site with a ToS that has clear anti-scraping language and that forces us to create an account or "constructively agree" as part of the use of the site.

Any user wishing to revoke authorization for anyone using our platform can make an abuse report on our site. We tend to handle these within 24 hours and haven't had a single claim go further than this stage, as we aim to be reasonable and look for a way to provide value to both sides.

It is good to know that you're cognizant of these issues, at least. Most websites have anti-scraping boilerplate in their ToU. I'm pretty sure that's in the "customized" ToU I got from LegalZoom. So you're basically saying that if the data is not behind a login and doesn't require you to fill any forms that contain either a checkbox or nearby language that indicates submitting the form constitutes acceptance of the ToU, you'll scrape it, even if the ToU explicitly bans scraping.

Glad you enjoyed the article! I'm hoping that more examples of ethical data extraction will start to turn the tide of public perception. Researchers, academics, data scientists, marketers, the list goes on for those who use web scraping daily.

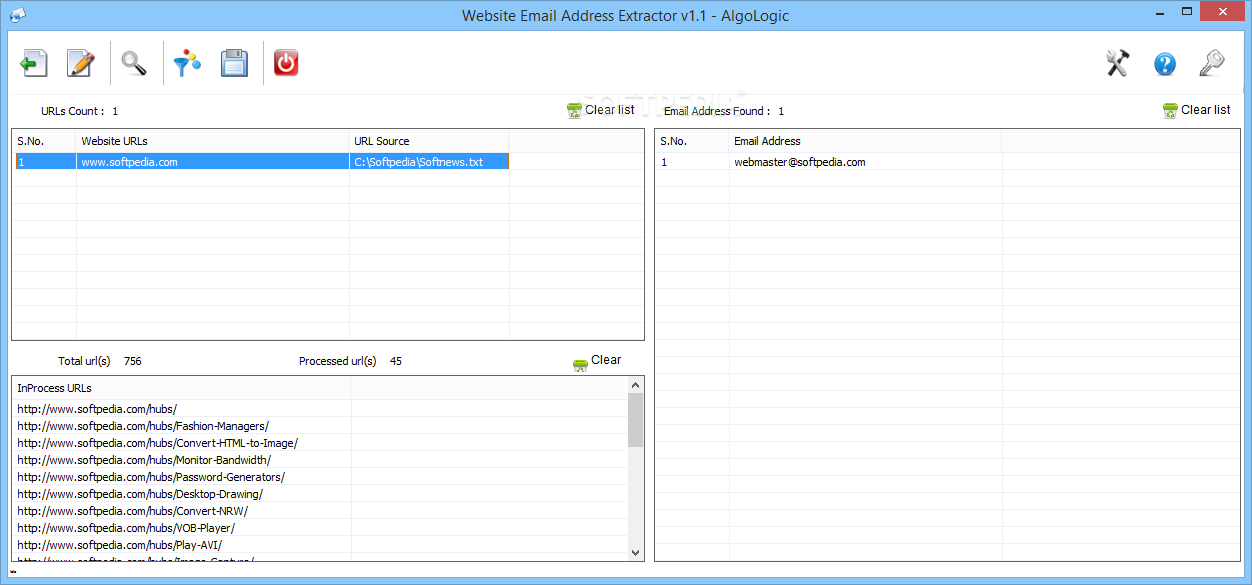

Full disclosure, I work for Scrapinghub. Our tools are Scrapy and Portia, both open source and both free as in beer. Scrapy is for those who want fine-tuned manual control and who have a background in Python. Portia is the visual web scraper for anyone, from non-technical to technical, who doesn't want to bother with code.

Web scraping is everywhere, even if it's not necessarily spoken about openly or acknowledged. The publicized perception of web scraping is fairly negative, but it doesn't take into account the benefits of data used in machine learning or democratized data extraction (as in the case of this article, or for building public service apps like transportation notifications), or the simple realities of competitive pricing and monitoring the activities of resellers.

Rule(SgmlLinkExtractor(allow=("index\d00\.html")), callback="parse_items_2", follow= True), Or since I removed follow=true, it doesn't crawl multiple pages.

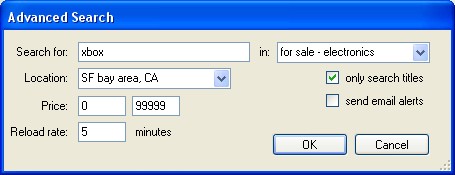

On Tuesday, Febru8:41:39 PM UTC-6, Srikanth Maru wrote:Īs a part of learning, I'm trying to use scrapy to crawl through domain ' ' and my requirement is as follows,įor start_url = parse_items_1 to parse and identify the links and allow only the links with follow.įor links in parse_items_2 to parse and identify the links with xpath index\d00\.htmlīut my rule definition is incorrect, either all the pages crawled from the main domain use only one parse i.e. Rule(SgmlLinkExtractor(allow=('\/npo')), callback="parse_items_1"), Rule(SgmlLinkExtractor(allow=("index\d \.html")), callback="parse_items_2", follow=True), Also, it is generally bad practice to alter spider attributes inside the callbacks since two callbacks can be running at "the same time".įrom import CrawlSpider, Ruleįrom import SgmlLinkExtractorįrom lector import HtmlXPathSelectorįrom ems import CraigslistSampleItemĪllowed_domains = There is no link on the sfbay homepage that satisfies this regex, " index\d00\.html", perhaps you meant, " index\d \.html".
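The regex point raised in the reply can be checked outside Scrapy with Python's standard `re` module. The sketch below is only an illustration under stated assumptions: the sample links are hypothetical (not taken from the real site), the relaxed pattern `index\d+\.html` is the assumed intended one, and `matching` is a made-up helper that mimics, as far as I know, how a link extractor's `allow` pattern is searched against each URL.

```python
import re

# Hypothetical hrefs, invented for illustration; not scraped from any real page.
sample_links = [
    "/npo/index100.html",
    "/npo/index200.html",
    "/npo/index150.html",
    "/npo/index2.html",
    "/npo/",
]

# Pattern from the question: exactly one digit followed by the literal "00".
strict = re.compile(r"index\d00\.html")

# Assumed intended pattern: one or more digits before ".html".
relaxed = re.compile(r"index\d+\.html")


def matching(pattern, links):
    """Return the links in which the pattern is found anywhere in the URL."""
    return [link for link in links if pattern.search(link)]


strict_hits = matching(strict, sample_links)
relaxed_hits = matching(relaxed, sample_links)

print("strict :", strict_hits)
print("relaxed:", relaxed_hits)
```

With these made-up links, the strict pattern only accepts page names such as `index100.html`, while the relaxed one accepts any numbered index page. If a site's pagination links don't happen to end in `00`, a rule built on the strict pattern would never fire its callback.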
